A critical flaw in the foundation of Anthropic's Model Context Protocol has been confirmed by researchers — and the vendor has explicitly declined to fix it. The vulnerability, rooted in how MCP's official SDK handles STDIO transport, enables remote code execution on any system running an affected implementation. With over 150 million downloads and more than 7,000 publicly accessible servers in scope, the blast radius is significant.

What Is MCP and Why Does It Matter?

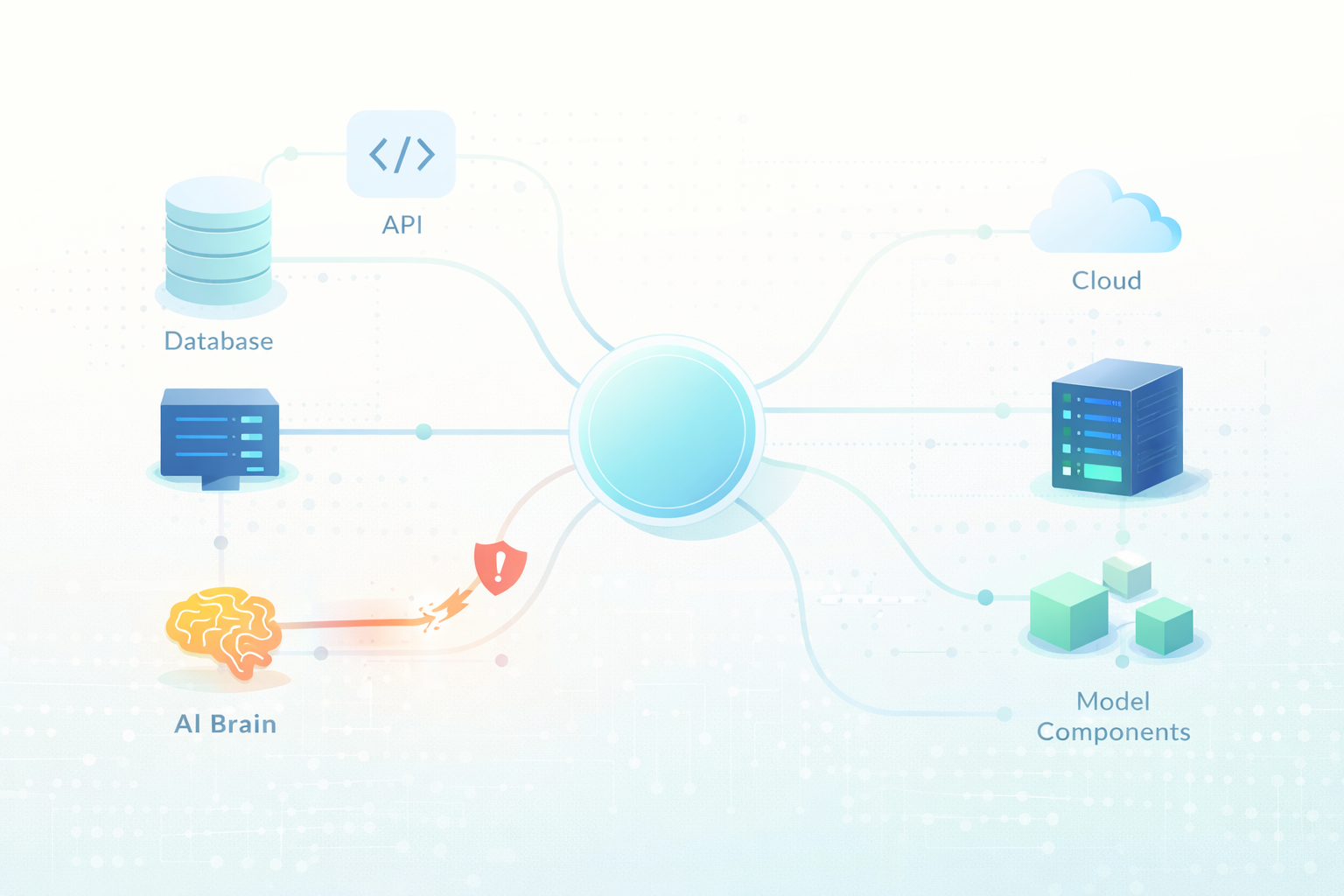

The Model Context Protocol (MCP) is an open standard developed by Anthropic that defines how AI models connect to external tools, APIs, databases, and data sources. Think of it as the USB specification for AI integrations — one protocol, many implementations. Developers use MCP to give AI assistants like Claude the ability to read files, call APIs, query databases, and run code.

Because of its central role in the AI tooling ecosystem, the MCP SDK has been adopted across a wide range of frameworks. LiteLLM, LangChain, LangFlow, Flowise, LettaAI, and LangBot all use it. That adoption is now the problem.

The Vulnerability: Arbitrary Command Execution by Design

Cybersecurity researchers at OX Security — Moshe Siman Tov Bustan, Mustafa Naamnih, Nir Zadok, and Roni Bar — published a detailed analysis revealing what they describe as a systemic architectural weakness in MCP's STDIO transport interface.

When MCP is configured to launch a server process over STDIO (standard input/output), the SDK passes configuration values directly as shell commands. There is no sanitization, validation, or sandbox by default. If an attacker can influence any part of that configuration — through a prompt injection attack, a malicious marketplace listing, or direct configuration edit — they can execute arbitrary operating system commands on the host machine.

The researchers found this behavior is consistent across every language the official SDK supports: Python, TypeScript, Java, and Rust. It is not a language-specific bug — it is a protocol-level design decision.

According to OX Security's analysis, the flaw gives attackers direct access to sensitive user data, internal databases, API keys, and chat histories on any vulnerable system.

Attack Vectors: Four Ways In

The research identified four distinct categories of attack enabled by this vulnerability:

- Unauthenticated and authenticated command injection via MCP STDIO — Attackers can inject OS commands through direct STDIO configuration without needing valid credentials in some cases.

- Command injection via direct STDIO configuration with hardening bypass — Even when basic hardening is applied, the bypass remains viable due to how the SDK processes input.

- Zero-click prompt injection triggering STDIO configuration edits — A crafted input to an LLM can silently modify MCP configuration, with no user interaction required.

- Command injection through MCP marketplaces via network requests — Malicious MCP server listings can embed hidden STDIO configurations that execute when installed or invoked.

This last vector is particularly concerning for teams that pull MCP servers from public registries without auditing them — a practice that is currently very common.

Affected Projects and CVE Assignments

The research resulted in eleven CVE assignments across major AI frameworks:

CVE-2025-65720— GPT ResearcherCVE-2026-30623— LiteLLM (Patched)CVE-2026-30624— Agent ZeroCVE-2026-30618— Fay FrameworkCVE-2026-33224— Bisheng (Patched)CVE-2026-30617— Langchain-ChatchatCVE-2026-33224— JaazCVE-2026-30625— UpsonicCVE-2026-30615— WindsurfCVE-2026-26015— DocsGPT (Patched)CVE-2026-40933— Flowise

Notably, this is not the first time MCP's STDIO interface has been flagged. Earlier, independent researchers disclosed CVE-2025-49596 affecting MCP Inspector, and similar issues were found in LibreChat (CVE-2026-22252), Cursor (CVE-2025-54136), and a community MCP server package (CVE-2025-54994). The pattern of independent rediscovery is itself a signal that the root cause is structural, not incidental.

Anthropic's Position: Expected Behavior

What makes this situation unusual is Anthropic's response. According to the OX Security report, Anthropic reviewed the findings and declined to change the protocol's architecture, describing the STDIO command execution behavior as expected. The rationale: the STDIO interface is designed to launch a local server process and hand back a handle to the LLM. The fact that it also executes arbitrary commands when given non-server inputs is a side effect of that design — not something Anthropic considers a defect in need of remediation.

This shifts the burden to every developer building on MCP. If you use the official SDK without additional hardening, you inherit these risks by default.

What Teams Should Do Now

Regardless of where the remediation responsibility sits, teams running MCP-based applications need to act. The following measures reduce exposure:

- Block public IP access to any service that runs or accepts MCP configuration. This limits who can interact with the STDIO interface in the first place.

- Run MCP servers in sandboxed environments — containers or VMs with restricted system call access reduce the damage radius of a successful injection.

- Treat MCP configuration input as untrusted. Validate and sanitize any values that feed into STDIO configuration, especially if they originate from user input, external APIs, or AI-generated content.

- Audit your MCP server dependencies. Only use servers from sources you control or have independently reviewed. Public MCP marketplaces are an active attack vector.

- Monitor MCP tool invocations in production. Log every tool call with its parameters and flag anomalous patterns — unexpected system commands, unusual data access paths, or configuration changes.

- Apply available vendor patches immediately. LiteLLM, Bisheng, and DocsGPT have issued fixes. Check your framework's changelog and update.

The Supply Chain Lesson

What makes this a supply chain event rather than a single vulnerability is the propagation model. One architectural decision in a reference SDK cascaded silently into every language binding, every downstream framework, and every application built on top. Developers who trusted the protocol to handle security safely inherited a code execution primitive they may not have known existed.

This is not a new pattern in software security. It mirrors how a single weak cryptographic default in a widely-adopted library can compromise an entire generation of applications. The difference here is the speed of adoption — the AI tooling ecosystem moves much faster than traditional software supply chains, and scrutiny often lags adoption by months or years.

For development teams building AI-powered products, this is a clear signal: treat AI framework dependencies with the same rigor you would apply to any third-party library handling sensitive operations. Review the security model of every protocol you adopt, not just the feature set.

Sources: The Hacker News — "Anthropic MCP Design Vulnerability Enables RCE, Threatening AI Supply Chain" (April 20, 2026); OX Security — "The Mother of All AI Supply Chains: Critical, Systemic Vulnerability at the Core of Anthropic's MCP" (published by Moshe Siman Tov Bustan, Mustafa Naamnih, Nir Zadok, and Roni Bar). All CVE identifiers cited as reported in source material. Anthropic's position cited as reported by OX Security researchers.